“An intriguing open question is whether the LLM is actually using its internal model of reality to reason about that reality as it solves the robot navigation problem,” says Rinard. “While our results are consistent with the LLM using the model in this way, our experiments are not designed to answer this next question.”

The paper, “Emergent Representations of Program Semantics in Language Models Trained on Programs,” can be found here.

Abstract

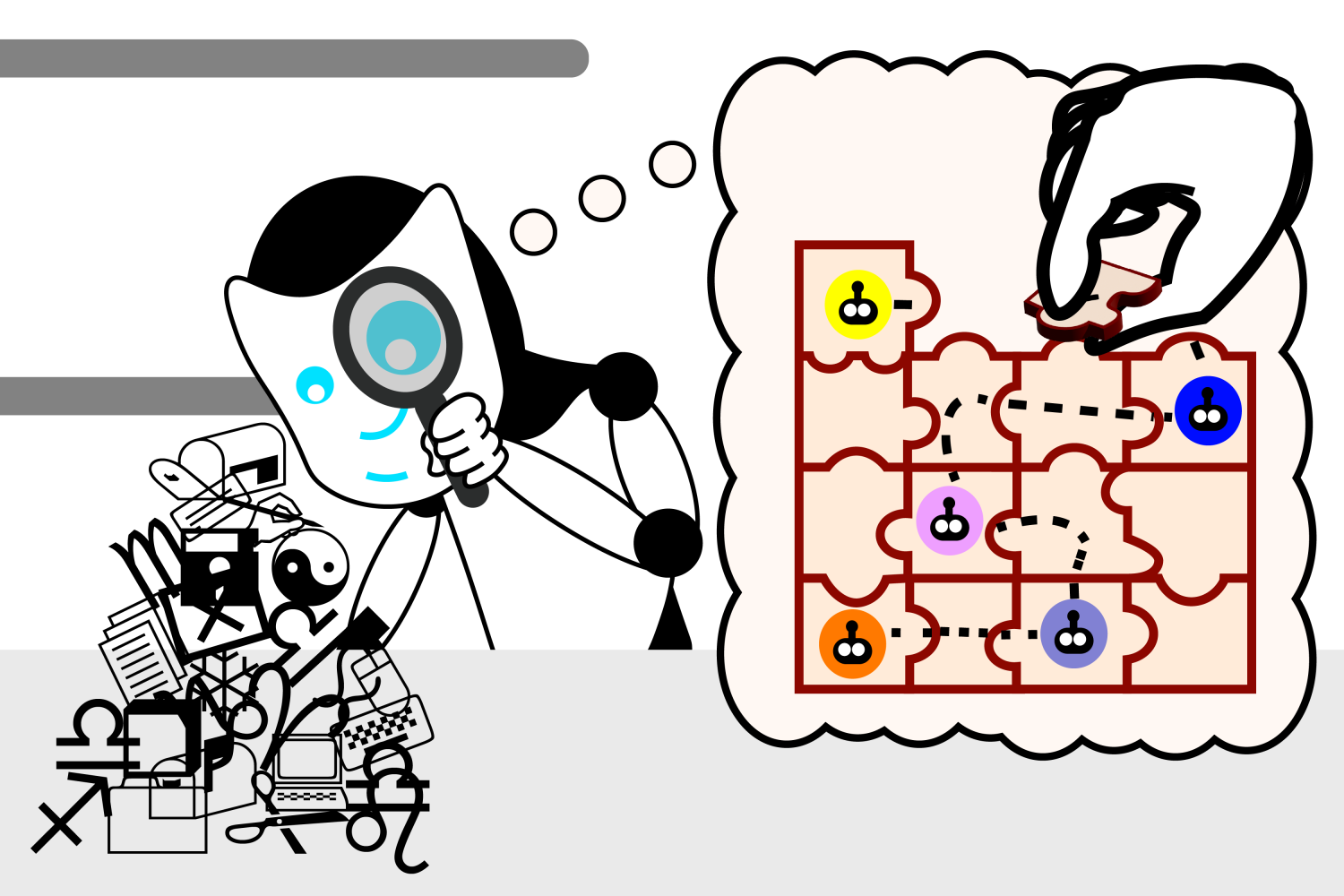

We present evidence that language models (LMs) of code can learn to represent the formal semantics of programs, despite being trained only to perform next-token prediction. Specifically, we train a Transformer model on a synthetic corpus of programs written in a domain-specific language for navigating 2D grid world environments. Each program in the corpus is preceded by a (partial) specification in the form of several input-output grid world states. Despite providing no further inductive biases, we find that a probing classifier is able to extract increasingly accurate representations of the unobserved, intermediate grid world states from the LM hidden states over the course of training, suggesting the LM acquires an emergent ability to interpret programs in the formal sense. We also develop a novel interventional baseline that enables us to disambiguate what is represented by the LM as opposed to learned by the probe. We anticipate that this technique may be generally applicable to a broad range of semantic probing experiments. In summary, this paper does not propose any new techniques for training LMs of code, but develops an experimental framework for and provides insights into the acquisition and representation of formal semantics in statistical models of code.
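For anyone unsure what a "probing classifier" means in the abstract above, here is a minimal sketch of the general idea. It is not the paper's code or its interventional baseline. It assumes synthetic vectors standing in for LM hidden states, and a hypothetical binary property of the grid world state as the label. All function names are made up for illustration. A small linear classifier is trained to read the label off the vectors; if the vectors encode it, accuracy is high, and if they don't, accuracy stays near chance.

```python
import math
import random


def make_states(n, dim, rng, encoded):
    """Synthetic stand-in for LM hidden states (an assumption, not real model data).

    If `encoded` is True, the binary label is linearly encoded along
    dimension 0; otherwise the vectors are pure noise unrelated to the label.
    """
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        vec = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        if encoded:
            vec[0] += 3.0 if label == 1 else -3.0
        data.append((vec, label))
    return data


def _sigmoid(z):
    # Clamp the input to avoid overflow in math.exp.
    z = max(-30.0, min(30.0, z))
    return 1.0 / (1.0 + math.exp(-z))


def train_probe(data, dim, epochs=30, lr=0.1):
    """Fit a logistic-regression probe with plain stochastic gradient descent."""
    w = [0.0] * dim
    b = 0.0
    for _ in range(epochs):
        for vec, label in data:
            z = sum(wi * xi for wi, xi in zip(w, vec)) + b
            grad = _sigmoid(z) - label
            w = [wi - lr * grad * xi for wi, xi in zip(w, vec)]
            b -= lr * grad
    return w, b


def accuracy(w, b, data):
    """Fraction of examples where the probe's prediction matches the label."""
    correct = 0
    for vec, label in data:
        z = sum(wi * xi for wi, xi in zip(w, vec)) + b
        correct += int((z > 0) == (label == 1))
    return correct / len(data)


def run_probe_experiment(encoded, seed=0, dim=8, n=200):
    """Train a probe on one sample of states and score it on a fresh sample."""
    rng = random.Random(seed)
    train = make_states(n, dim, rng, encoded)
    test = make_states(n, dim, rng, encoded)
    w, b = train_probe(train, dim)
    return accuracy(w, b, test)


if __name__ == "__main__":
    print("label encoded in states:", run_probe_experiment(encoded=True))
    print("label absent from states:", run_probe_experiment(encoded=False))
```

The gap between the two runs is the point: the probe is only as good as the information already present in the vectors. The paper's concern about what is "learned by the probe" is a harder version of this question, and this sketch does not address it.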

That is very deliberately not in the spirit of the question I asked. It’s almost like you’re intent on misunderstanding on purpose just so you can feel like you’re right.

You asked if it could do a task I wasn’t even capable of doing, and this was your assessment of consciousness.

No. I asked what would happen if it had been given an unclassified, unnamed species, not something someone else just discovered and has already parsed information on. And the point is humans can and do do this, and have done it for centuries with the right training, as the systems we use for classification have been dialed in.

The model has the information on how to classify. It can be added to with scraped data from the internet. But it does not do the same things a trained individual does to classify and name a new species. Because it is not capable of that.

The information from 4 days ago had not been parsed; that’s why I chose something so recent.

An LLM can be trained to do this. Literally when it looked at the Petrel it did things humans do such as take note of the dark colours common in seabirds, the small size, etc. and it used those points to reach its conclusion.

We don’t do anything special as humans, we take in data, process it, and spit out a result. It’s why a child has to be taught basic concepts such as creativity or socialising.

Given nothing at all, could the LLM quantify or develop the tools and systems we use to categorize such species? Could it discover a species? The spirit of the question is, humans have been able to look at the world around them, using data we gain from our 5 senses and the scientific method to do this. The LLM cannot develop the same information gathering or classification, diagnostic, or scientific method skills in order to do the same. It relies solely on what we provide it and can only operate within those parameters. It does not have senses of its own. That’s the point. Go look up how we have learned to quantify sapience. Because what you’re saying is that you (a small data point out of trillions or more) can’t do a thing a computer can do, so it must be able to think.

Exactly. If we give an LLM with no training data a large group of specimens, will it organize them into logical groups? Does it even understand the concept of organizing things into discrete groups?

That’s something that’s largely encoded into our brain structures due to millennia of evolution (or creation, take your pick) where such organization is advantageous. The LLM would only do it if we indicated that such organization is advantageous, and even then would only do it if we gave it a desired output. An LLM will only reflect the priorities of its creator, or at least the priorities baked into the training data. It’s not going to suggest that something else entirely be considered, because it only considers things through the lenses we give it.

Humans will question assumptions, will organize things without being prompted, and will generate our own priorities. I firmly believe an LLM cannot, and thus cannot be considered self-deterministic, and thus not sentient. All it can do is optimize for the priorities we give it, and while it may do that in surprising ways, that doesn’t mean there’s “thinking” going on, just that it’s a complex system we don’t fully understand (even if we created it). Maybe human brains work in a similar way (i.e. completely deterministic given a specific genome and “training data”), but we know LLMs work that way, so until we prove that humans work similarly, we cannot equate them. It’s kind of like the P = NP question: we know LLMs are deterministic, but we don’t know if humans are. So the question isn’t “can LLMs think” (we know they can’t), but “can humans think.”