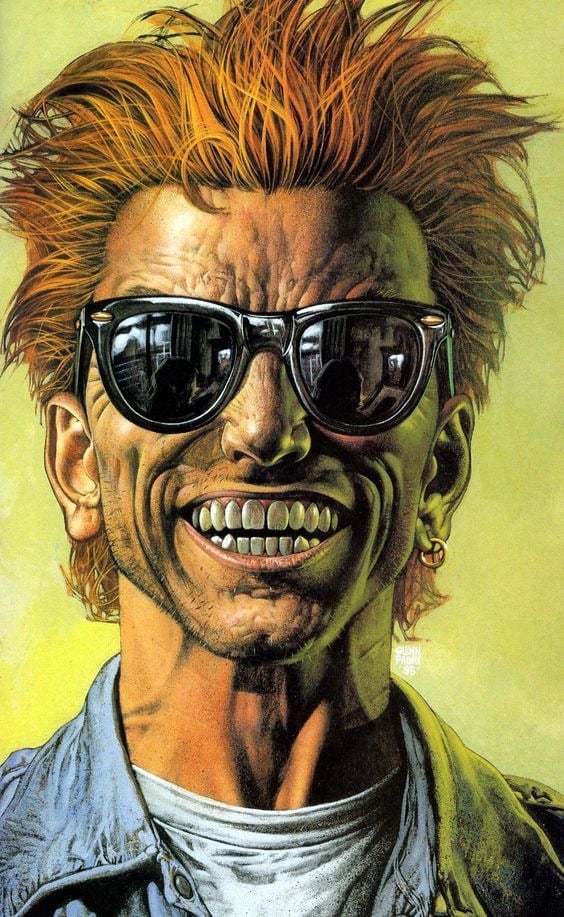

Haven’t used any coding LLMs. I honestly have no clue about the accuracy of the comic. Can anyone enlighten me?

I use them frequently. They’re extremely helpful, just don’t get them to write everything.

As for the comic, it’s pretty inaccurate. The only one that I find true is the too much water, sometimes the bots like to take … longer methods.

From what I understand of LLMs your assessment does seem likely to me. LLMs might actually be pretty accurate when asked to do relatively simpler, shorter tasks.

Yeah, I asked it to generate SDKs from API documentation and it failed to pull all the routes into methods, so it’s very much temperamental. If there’s an easier SDK conversion program that I’m missing, I would prefer hard-coded logic machines over fuzzy LLMs.

Everyone has different experiences, but it’s very hit and miss for me. Sometimes it gives some very useful boilerplate, saving me quite a bit of time. Sometimes it hallucinates some insane stuff that isn’t related to what I asked, or makes functions that don’t return or don’t call each other.

Like defining a function “getTheThing” then later calling “getSomethingElse” that doesn’t exist. It’s a simple enough error to fix, but sometimes it’s so close to “correct” that debugging it takes quite a lot to find, because it looks right.
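A rough way to catch that specific failure before running anything is to parse the generated code and list the plain function calls that have no matching definition. This is a minimal sketch, assuming the generated code is Python; `find_undefined_calls` is a made-up helper name, the sample string just reuses the `getTheThing` / `getSomethingElse` names from above, and it ignores method calls and scoping, so treat it as a quick sanity check rather than a real linter.

```python
import ast
import builtins


def find_undefined_calls(source: str) -> set[str]:
    """Return names called as plain functions that are never defined in `source`.

    Hypothetical helper: it ignores scoping and attribute calls (obj.method()).
    """
    tree = ast.parse(source)
    defined = set(dir(builtins))  # print, len, ... count as defined
    called = set()
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            defined.add(node.name)
        elif isinstance(node, ast.alias):  # import x / from x import y as z
            defined.add((node.asname or node.name).split(".")[0])
        elif isinstance(node, ast.arg):  # function parameters
            defined.add(node.arg)
        elif isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
            defined.add(node.id)  # assigned variables
        elif isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            called.add(node.func.id)
    return called - defined


# Sample "generated" code with the mismatch described above.
generated = """
def getTheThing(key):
    return {"key": key}

def main():
    thing = getSomethingElse("abc")
    print(thing)
"""

print(find_undefined_calls(generated))  # {'getSomethingElse'}
```

It won’t catch the subtler “looks right but isn’t” bugs, but it does flag the missing-function case without having to execute the code.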

The comic is only accurate if you expect it to do everything for you, you’re bad at communicating, and you’re using an old model. Or if you’re just unlucky.

I’d add it depends also on your field. If you spend a lot of time assembling technically bespoke solutions, but they are broadly consistent with a lot of popular projects, then it can cut through a lot in short order. When I come to a segment like that, LLM tends to go a lot further.

But if you are doing something because you can’t find anything vaguely like what you want to do, it tends to only be able to hit like 3 or so lines of useful material a minority of the time. And the bad suggestions can be annoying. Less outright dangerous after you get used to being skeptical by default, but still annoying as it insists on re-emphasizing a bad suggestion.

So I can see where it can be super useful, and also how it can seem more trouble than it is worth.

Claude and GPT have been my current experience. The best improvement I’ve seen is the suggestions getting shorter. It used to give like 3 maybe-useful lines bundled with a further dozen lines of not what I wanted. Now the first three lines might be similar, but it’s less likely to suggest a big chunk of code.

Was helping someone the other day and the comic felt pretty accurate. It did exactly the opposite of what the user prompted for. Even after coaxing it into the general ballpark, about half the generated code was unrelated to the requested task, with side effects that would have seemed functional unless you paid attention and noticed that throughput would have been about 70% lower than you should expect. It was a significant risk, as the user was in over their head and unable to understand the suggestions they needed to review, since they were working in a pretty jargon-heavy ecosystem (not the AI’s fault; they had to invoke standard libraries that had incomprehensible, jargon-heavy syntax).

They’re okay for tasks which are reasonably a single file. I use them for simple Python scripts since they generally spit out something very similar to what I’d write, just faster. However there is a tipping point where a task becomes too complex and they fall on their face and it becomes faster to write the code yourself.

I’m never going to pay for AI, so I’m really just burning the AI company’s money as I do it, too.

I point blank refuse to use them. I’ve seen how they’ve affected my coworker and my boss - these two people now simply cannot read documentation, do not trust their own abilities to write code, and cannot debug anything that they write. My job has become more difficult since this shit started being pushed on us all.

Yeah kinda. I ask it to do something simple like create a TypeScript interface for some JSON and it just gives me what I want… most of the time.

Other times it will explain to me what JSON is, what TypeScript is, what interfaces are and how they’re used, blah blah, and somewhere in there is the code I actually wanted. Once it helpfully commented the code… in Korean. Even when it works and comments things in English, the comments can be kinda useless since it doesn’t actually know what I’m doing.

It’s trying to give you what you want but can sometimes get confused about what you’re asking for and give a bunch of stuff you didn’t actually want. So yeah, the comic is accurate… on occasion. But many times LLMs will give good results, and it’s getting better, so it’ll mostly work ok for simple requests. But yeah, sometimes it’ll give you a lot more stuff than what you wanted.

they suck

I use LLMs to write small scripts because I’m too lazy to learn bash, MS cmd, and regex, and so far I have not ruined anything.

It’s sometimes useful, often obnoxious, sometimes both.

It tends to shine on very blatantly obvious boilerplate stuff that is super easy, but tedious. You can be sloppy with your input and it will fix it up to be reasonable. Even then you’ve got to be careful, as sometimes what seems blatantly obvious still gets screwed up in weird ways. Even with mistakes, it’s sometimes easier to edit than going from scratch.

Using an enabled editor that looks at your activity and suggests little snippets is useful, but can be really annoying when it gets particularly insistent on a bad suggestion and keeps nagging you with “hey look at this, you want to do this right?”

Overall it’s merely mildly useful to me, as my career has been significantly about minimizing boilerplate with decent success. However for a lot of developers, there’s a ton of stupid boilerplate, owing to language design, obnoxiously verbose things, and inscrutable library documentation. I think that’s why some developers are scratching their heads wondering what the supposed big deal is and why some think it’s an amazing technology that has largely eliminated the need for them to manually code.

The only thing I trust it with is refactoring for readability and writing scripts. But I also despise LLMs, so that’s all I’d give them.

I find you get much better results if you talk to the LLM like you were instructing a robot, not a human. That means being very specific about everything.

It’s the difference between, “I would like water” and, “I would like a glass filled with drinking water and a few cubes of ice mixed in”.

Much like Amazon has an incentive to not show you the specific thing it knows you’re searching for, people theorize that these interfaces are designed to burn through your tokens.

I doubt that’s the case, currently.

Right now, there’s a lot of genuine competition in the AI space, so they’re actually trying to outcompete one another for market share. It’s only once users are locked into using a particular service that they begin deliberate enshittification with the purpose of getting more money, either from paying for tokens, or like Google did when it deliberately made search quality worse so people would see more ads (“What are you gonna do, go to Bing?”).

By contrast, if ChatGPT sucks, you can locally host a model, use one from Anthropic, Perplexity, any number of interfaces for open source (or at least, source-available) models like Deepseek, Llama, or Qwen, etc.

It’s only once industry consolidation really starts taking place that we’ll see things like deliberate measures to make people either spend more on tokens, or make money from things like injecting ads into responses.

> if ChatGPT sucks

Most people don’t know anything beyond ChatGPT and Copilot.

If we are talking programmers, maybe include Claude, Gemini, DeepSeek and Perplexity search, though this is not always true.

…Point being, OpenAI does have a short term ‘default’ and known brand advantage, unfortunately.

That being said, there’s absolutely manipulation of LLMs, though not what OP is thinking per se. I see more of:

- Benchmaxxing with a huge sycophancy bias (which works particularly well in LM Arena).
- Benchmaxxing with massive thinking blocks, which is what OP is getting at. I’ve found Qwen is particularly prone to this, and it does drive up costs.
- Token laziness from some of OpenAI’s older models, as if they were trained to give short responses to save GPU time.
- “Deep Frying” models for narrow tasks (coding, GPQA style trivia, math, things like that) but making them worse outside of that, especially at long context.
- …Straight up cheating by training on benchmark test sets.
- Safety training to a ridiculous extent with stuff like Microsoft Phi, OpenAI, Claude, and such, for political reasons and to avoid bad PR.

In addition, ‘free’ chat UIs are geared for gathering data they can use to train on.

You’re right that there isn’t much like ad injection or deliberate token padding yet, but still.


I think it’s more about extracting money from normies, not someone savvy enough to run a model locally. And I don’t know if they do or don’t, I was just trying to explain the comic.

I tried using Cursor IDE and Claude Sonnet 4 to make an extension for Blender, and it keeps getting to the exact same point of development (super basic functions), and then constantly breaking it when I try to get it to fine-tune what I need done… This comic is accurate af.

AI = bad, I know, but do people order “water, please” instead of “a glass of water, please”?

Unless you want to end up with an expensive bottle of french water instead of a single glass of tap water

I don’t know what restaurants you go to, but during my adult life not once has “water please” failed to get me a glass of water.

Sometimes the waiter asks if bottled or tap, but that’s about it.

I went to a restaurant in Dallas near the stadiums where asking for “water, please” got us a glass bottle of whatever rich-people water they served.

Everything was expensive and the food was super whatever. It’s called Soy Cowboy, if anybody’s curious.

Well, since it’s annoying for both the waiter and the customer to have to ask or specify every time whether it’s bottled or tap, I just always say it directly, and I’ve seen other people do it.

While abroad many years ago, I once asked for water in a restaurant and ended up with an $8 1 L bottle. I’m not making that mistake again.

I definitely often say water, usually because of the way it’s asked, usually something like “What would you like to drink today?”

So it’s usually just “water” or “I’ll have water” or whatever drink in response. Glass of water sounds odd in response to me, especially since sometimes it’s green tea which usually wouldn’t come in a glass. Or coffee, etc

Hey now, expensive French water is really good though! How expensive is it where you live? I get a big (75cl?) bottle of San Pellegrino for like 3.50€ here in France.

That is a good depiction

Wouldn’t a waiter AI be trained on a dataset of food orders and hence know exactly what an order of water would be by the context?

Some days it will be but other days it won’t be. Most of the time it can save me typing because it’ll do what I want. Sometimes (for similar tasks in the same context) it’ll just be completely off. Once it helpfully commented my code… in Korean.

LLMs are like a box of chocolates, you never know what you’re gonna get.

Self-host your LLMs. Qwen3:14b is fast, open source, and answers code questions with very good accuracy.

You only need Ollama and a Podman container (for Open WebUI).
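If you’d rather hit the local model from a script than through the web UI, something like the following should work. It’s a minimal sketch, assuming Ollama is listening on its default local port (11434), that the `qwen3:14b` model has already been pulled, and that the non-streaming `/api/generate` endpoint is used; `build_request` and `ask` are just illustrative helper names, not part of any library.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; change it if yours differs.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "qwen3:14b") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "qwen3:14b") -> str:
    """Send the prompt and return the generated text (needs the server running)."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With the server up, `ask("Write a bash one-liner that counts lines in all .py files")` returns the model’s answer as a string. How fast that is depends entirely on your hardware.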

Frankly, I don’t think you seriously tested anything that you’ve mentioned here.

Nobody’s using Qwen because it doesn’t do tool calls. Nobody really uses ollama for useful workloads because they don’t own the hardware to make it good enough.

That’s not to say that I don’t want self-hosted models to be good. I absolutely do. But let’s be realistic here.

Water, please.

Here is your cactus

Why are people saying please to robots anyway?

I just let it create a function in a temporary file that takes specific parameters because it always tries to scramble my project

Oh yes, give me AI assistant, I will whisper sweet nothings and it will give me the moon, your moon.