- cross-posted to:

- [email protected]

cross-posted from: https://lemmy.world/post/7258145

The tool, called Nightshade, messes up training data in ways that could cause serious damage to image-generating AI models. It is intended as a way to fight back against AI companies that use artists’ work to train their models without the creators’ permission.

ARTICLE - Technology Review

ARTICLE - Mashable

ARTICLE - Gizmodo

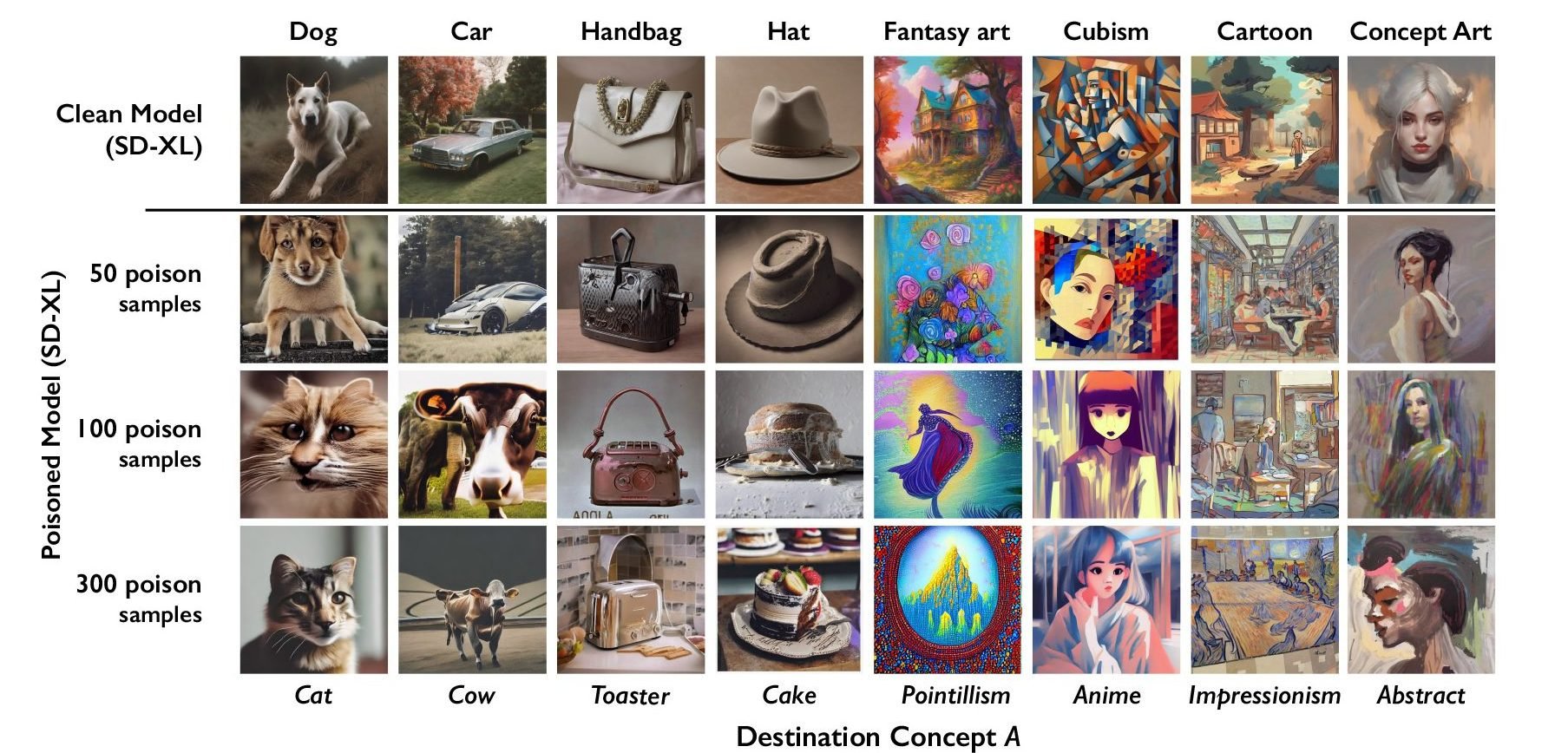

The researchers tested the attack on Stable Diffusion’s latest models and on an AI model they trained themselves from scratch. When they fed Stable Diffusion just 50 poisoned images of dogs and then prompted it to create images of dogs itself, the output started looking weird: creatures with too many limbs and cartoonish faces. With 300 poisoned samples, an attacker can manipulate Stable Diffusion into generating images of dogs that look like cats.

Also, I’d really like to know: how much additional processing time is required to de-nightshade an image? And how much is required to detect Nightshade, if that’s even a different amount? Do you just have to de-nightshade every image to be safe?

Suppose the workload of de-nightshading is equal to the workload of training on that image. You’ve just doubled training costs. What if it’s four times? Ten times?
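To put numbers on the multipliers above, here is a minimal sketch of a per-image cost model, with training on one image normalized to 1.0. It reads “four times” and “ten times” as cleaning costing that multiple of training, so the totals come out to 5x and 11x rather than 4x and 10x. The `detect_ratio` and `flagged_fraction` parameters are hypothetical, added to illustrate the “detect first, clean only what’s flagged” option raised in the previous comment; none of these figures are measured values for Nightshade or any real de-nightshading tool.

```python
def relative_cost(clean_ratio: float,
                  detect_ratio: float = 0.0,
                  flagged_fraction: float = 1.0) -> float:
    """Per-image pipeline cost relative to training alone (training = 1.0).

    Hypothetical cost model, not measured numbers:
      clean_ratio      -- cost of de-nightshading one image, as a multiple of training on it
      detect_ratio     -- cost of running a detector on one image (0.0 = no detector)
      flagged_fraction -- share of images sent on to cleaning (1.0 = clean every image)
    """
    if not 0.0 <= flagged_fraction <= 1.0:
        raise ValueError("flagged_fraction must be between 0 and 1")
    return 1.0 + detect_ratio + flagged_fraction * clean_ratio


# "Clean every image to be safe": cleaning costs 1x, 4x, 10x the training work.
for ratio in (1.0, 4.0, 10.0):
    print(f"clean = {ratio}x training -> total {relative_cost(ratio)}x")

# Hypothetical detector costing half a training pass that flags 5% of images
# for a cleaner that costs 10x training.
print(relative_cost(10.0, detect_ratio=0.5, flagged_fraction=0.05))
```

Under this model the detector route only helps if detection is much cheaper than cleaning and flags only a small share of images; a detector that is itself as expensive as training already doubles the bill on its own.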

That de-nightshading tool works in a lab, sure, but the real question is whether it scales in a practical and cost-effective way. For each individual artist the cost of applying Nightshade is functionally nil, but the cost of detecting or removing it could be extremely high.

Plus they have to keep developing solutions to Nightshade 2 and Nightshade 2.1 and the Deathcap fork etc. etc. An enthusiastically developed open source project with a bunch of forks and versions is not an easy thing for a big lumbering corporation to keep up with. Especially a corporation that is actively trying to replace staff with AI coders.

There are also the asymmetric failure modes. If Nightshade fails, well, we just end up with the current status quo: image models get trained on people’s art. But if the tactics to prevent it fail, a very expensive image model gets poisoned in some specific way. So it’s much more important for the model trainers to always succeed than it is for the people developing Nightshade variants.
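One way to make the “always succeed” point concrete uses the numbers reported in the post: 50 poisoned dog images were enough to distort Stable Diffusion’s dogs, and 300 were enough to turn them into cats. The sketch below assumes a hypothetical filter that misses each poisoned image independently with some fixed probability, so the number that slips through is binomially distributed. The 10,000-image scenario and the miss rates are made-up illustrations, not measurements of any real detector.

```python
import math


def expected_leaked(poisoned: int, miss_rate: float) -> float:
    """Expected number of poisoned images a filter fails to catch."""
    return poisoned * miss_rate


def prob_at_least(poisoned: int, miss_rate: float, threshold: int) -> float:
    """Probability that at least `threshold` poisoned images slip past the filter.

    Assumption: each poisoned image is missed independently with probability
    `miss_rate`, so the number missed is Binomial(poisoned, miss_rate).
    """
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    if threshold <= 0:
        return 1.0
    if threshold > poisoned:
        return 0.0
    if miss_rate == 0.0:
        return 0.0  # a perfect filter lets nothing through
    if miss_rate == 1.0:
        return 1.0  # a useless filter lets everything through
    # Sum P(X = i) for i < threshold in log space (lgamma instead of
    # math.comb) so large binomial coefficients never overflow a float.
    log_p, log_q = math.log(miss_rate), math.log1p(-miss_rate)
    below = 0.0
    for i in range(threshold):
        log_pmf = (math.lgamma(poisoned + 1) - math.lgamma(i + 1)
                   - math.lgamma(poisoned - i + 1)
                   + i * log_p + (poisoned - i) * log_q)
        below += math.exp(log_pmf)
    return max(0.0, 1.0 - below)


# Hypothetical scenario: 10,000 poisoned dog images end up in a scrape.
for miss in (0.01, 0.001):
    print(miss, expected_leaked(10_000, miss), prob_at_least(10_000, miss, 50))
```

In this toy setup a filter that catches 99% of poisoned images still lets through about 100 of them on average, twice the 50 that distorted outputs in the reported test, while the artists only needed some of their images to get through. Real training pipelines and real poisoning thresholds are more complicated, so treat this as an illustration of the asymmetry rather than a prediction.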

Well, degenerative AI in general doesn’t scale in a practical and cost-effective way, so … I think the conclusion for de-nightshading is obvious?