I’m trying to train a machine learning model to detect if an image is blurred or not.

I have 11,798 unblurred images, and I have a script to blur them and then use that to train my model.

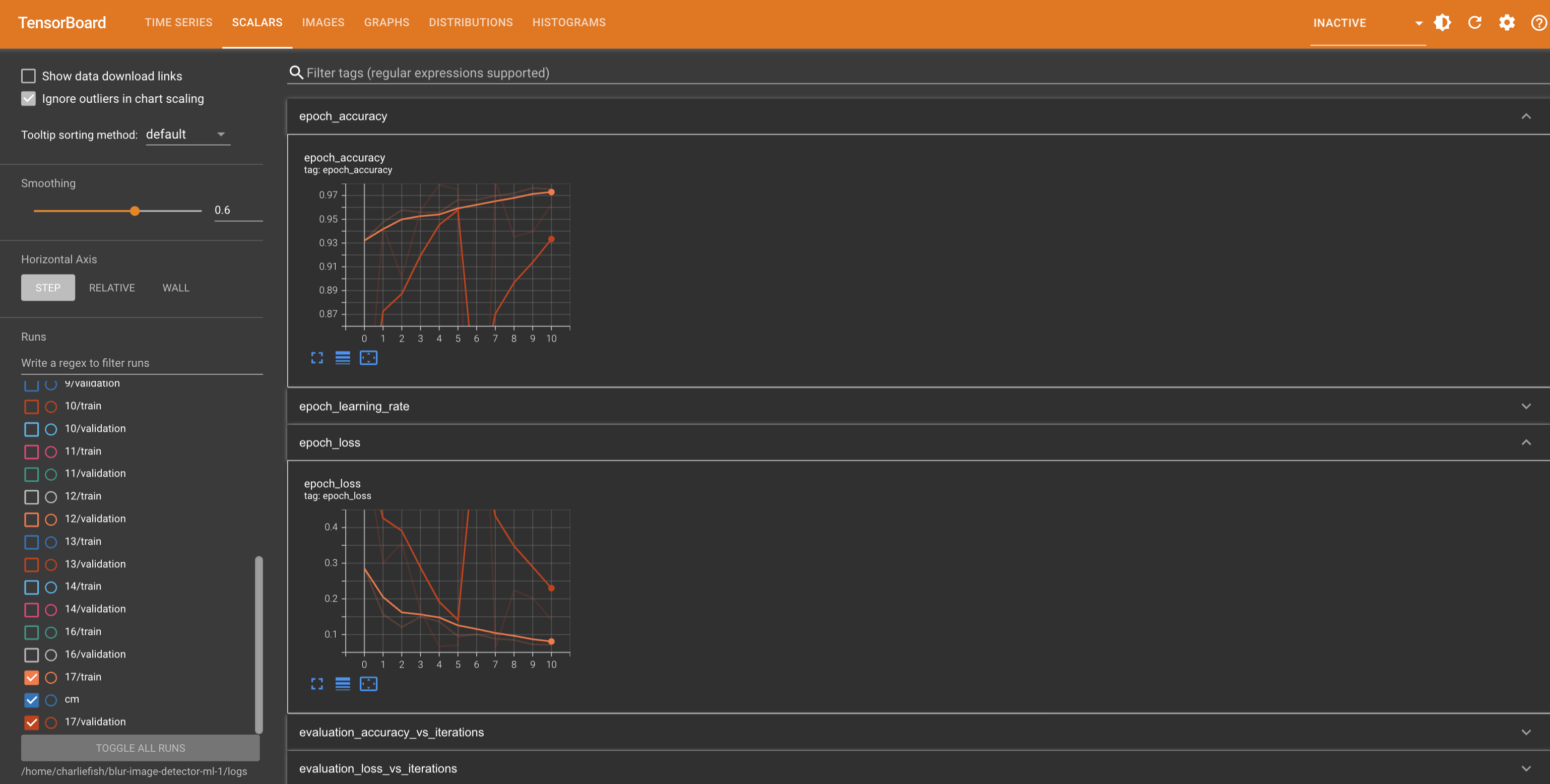

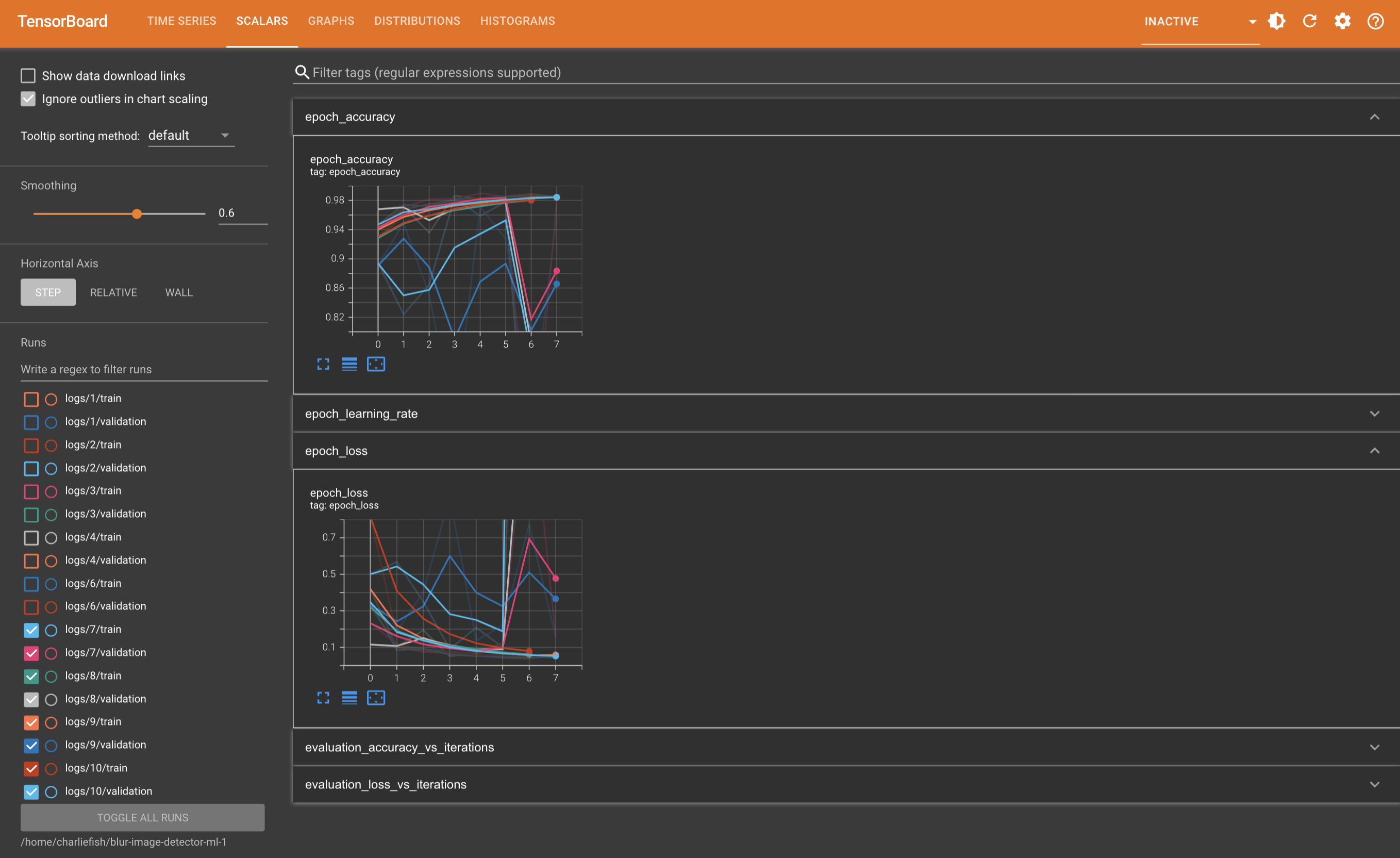

However, when I run the exact same training 5 times, the results are wildly inconsistent (as you can see below). It also tops out at 98.67% accuracy.

I’m pretty new to machine learning, so maybe I’m doing something really wrong. But coming from a software engineering background and just starting out with machine learning, I have tons of questions. It’s a struggle to know why it’s so inconsistent between runs, how good is good enough (i.e., when I should deploy the model), and how to keep improving the accuracy and make the model better.

Any advice or insight would be greatly appreciated.

View all the code: https://gist.github.com/fishcharlie/68e808c45537d79b4f4d33c26e2391dd

I feel like 98+% is pretty good. I’m not an expert, but I know overfitting is something to watch out for.

As for the inconsistency, man, I’m not sure. You’d think it’d be 1 to 1 with the same dataset, wouldn’t you? Perhaps the order of the input files isn’t the same between runs?

I know that when training you get diminishing returns on optimization and that there are MANY factors that affect performance and accuracy which can be really hard to guess.

I did some ML optimization tutorials a while back and you can iterate through algorithms and net sizes and graph the results to empirically find the optimal combinations for your data set. Then when you think you have it locked, you run your full training set with your dialed in parameters.

Keep us updated if you figure something out!

I think what you’re referring to with iterating through algorithms and such is called hyperparameter tuning. I think there is a tool called Keras Tuner you can use for this.

However, I’m incredibly skeptical that will work in this situation because of how variable the results are between runs. I run it with the same input, same code, everything, and get wildly different results. For tuning to be effective, I think the results need to be fairly consistent between runs.
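One way to make that concern concrete is to compare configurations by their mean and spread over several runs instead of by a single run. The sketch below is only an illustration: the helper names and the accuracy numbers are made up, and the "clearly better" rule is a crude heuristic, not a proper statistical test.

```python
import statistics

def summarize_runs(accuracies):
    """Return (mean, sample standard deviation) of per-run validation accuracies."""
    mean = statistics.mean(accuracies)
    # stdev needs at least two runs; with one run there is no spread to report.
    spread = statistics.stdev(accuracies) if len(accuracies) > 1 else 0.0
    return mean, spread

def clearly_better(runs_a, runs_b):
    """Crude check: is A's mean above B's by more than both spreads combined?"""
    mean_a, sd_a = summarize_runs(runs_a)
    mean_b, sd_b = summarize_runs(runs_b)
    return mean_a - mean_b > sd_a + sd_b

# Placeholder numbers, made up for illustration (not results from this thread).
config_a = [0.95, 0.97, 0.96, 0.98, 0.94]
config_b = [0.90, 0.97, 0.85, 0.96, 0.92]

for name, runs in (("A", config_a), ("B", config_b)):
    mean, spread = summarize_runs(runs)
    print(f"{name}: {mean:.3f} +/- {spread:.3f}")
print("A clearly better than B:", clearly_better(config_a, config_b))
```

With placeholder numbers like these, the gap between the two means is smaller than the run-to-run spread, which is exactly the situation where a tuner comparing single runs would mostly be chasing noise.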

I could be totally off base here tho. (I haven’t worked with this stuff a ton yet).

Your training loss is going fine, but your validation isn’t really moving with it at all. That suggests either there’s some flaw where the training and validation data aren’t treated the same way, or your model is overfitting rather than solving the problem in a general way. From a quick scan of the code, nothing too serious jumps out at me. The convolution size seems a little small (is a 3x3 kernel large enough to recognize blur?), but I haven’t thought much about how much impact a Gaussian blur has at the small scale. Increasing that could help. If it doesn’t, I’d look into reducing the number of filters or the dense layer. Reducing the available space can force an overfitting network to figure out more general solutions.

Lastly, I bet someone else has either solved the same problem as an exercise or something similar and you could check out their network architecture to see if your solution is in the ballpark of something that works.

Thanks so much for the reply!

> The convolution size seems a little small

I changed this to 5 instead of 3, and it’s hard to tell if that made much of an improvement. It’s still pretty inconsistent between training runs.

> If it doesn’t I’d look into reducing the number of filters or the dense layer. Reducing the available space can force an overfitting network to figure out more general solutions

I’ll try reducing the dense layer from 128 to 64 next.
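For a rough sense of how much each of these changes moves the model’s capacity, the standard parameter-count formulas can be worked out by hand. This is a minimal sketch: the input channels, filter count, and flattened feature size are assumptions picked for illustration and are not read from the gist.

```python
def conv2d_params(kernel_size, in_channels, filters):
    """Weights plus one bias per filter for a standard 2D convolution."""
    return (kernel_size * kernel_size * in_channels + 1) * filters

def dense_params(inputs, units):
    """Weights plus one bias per unit for a fully connected layer."""
    return (inputs + 1) * units

# Assumed values for illustration only: RGB input, 32 filters,
# and 10,000 features flattened into the dense layer.
IN_CHANNELS, FILTERS, FLATTENED = 3, 32, 10_000

for k in (3, 5):
    print(f"{k}x{k} conv: {conv2d_params(k, IN_CHANNELS, FILTERS):,} parameters")
for units in (128, 64):
    print(f"dense {units}: {dense_params(FLATTENED, units):,} parameters")
```

Under these assumed sizes the dense layer holds far more parameters than the convolution, so halving its units removes much more capacity than the kernel change adds. The real numbers depend on the actual layer shapes, which `model.summary()` in Keras would report.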

> Lastly, I bet someone else has either solved the same problem as an exercise or something similar and you could check out their network architecture to see if your solution is in the ballpark of something that works

This is a great idea. I did a quick Google search and nothing stood out at first, but I’ll dig deeper.

It’s still super weird to me how variable it can be with zero changes. I don’t change anything, and on one run it consistently improves for a few epochs, while on the next run it’s a lot less accurate to start and declines after the first epoch.

It looks like 5x5 led to an improvement. Validation is moving with training for longer before hitting a wall and turning to overfitting. I’d try bigger to see if that trend continues.

The difference between runs is due to the random initialization of the weights. On some runs you’ll just randomly start nearer to a solution that works better, priming it to reduce loss quickly. Generally you don’t want to rely on that and just cherry-pick the one run that looked the best in validation. A good solution will almost always get to a roughly similar end result, even if some runs take longer to get there.

Got it. I’ll try with some more values and see what that leads to.

So does that mean my learning rate might be too high and it’s overshooting the optimal solution sometimes based on those random weights?

No, it’s just a general thing that happens. Regardless of rate, some initializations start nearer to the solution. Other times you’ll see a run not really make much progress for a little while before finding the way and then making rapid progress. In the end they’ll usually converge at the same point.
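A toy illustration of that behavior, written as a sketch in plain Python rather than with the actual model: a single weight is fitted by gradient descent from a seed-dependent starting point. The `train` function and its data are hypothetical, and this problem is convex, so it converges far more reliably than a real network would.

```python
import random

def train(seed, epochs=50, lr=0.1):
    """Fit y = w * x by gradient descent from a seed-dependent starting weight."""
    rng = random.Random(seed)
    w = rng.uniform(-1.0, 1.0)          # random initialization
    xs = [0.5, 1.0, 1.5, 2.0]
    ys = [2.0 * x for x in xs]          # the true weight is 2.0
    losses = []
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= lr * grad
        losses.append(sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs))
    return losses

for seed in range(3):
    losses = train(seed)
    print(f"seed {seed}: first-epoch loss {losses[0]:.4f}, final loss {losses[-1]:.2e}")

# The same seed reproduces the same curve exactly.
print("repeatable with a fixed seed:", train(0) == train(0))
```

Different seeds give different early losses but end up in the same place, and a fixed seed makes a run repeatable. If the gist uses TensorFlow/Keras, the analogous knob should be `tf.keras.utils.set_random_seed`, called before the model is built, though GPU nondeterminism can still leave small differences.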

So does the fact that they aren’t converging near the same point indicate there is a problem with my architecture and model design?

That your validation results aren’t generally moving in the same direction as your training results indicates a problem. The training results are all converging, even though some drop at steeper rates. You won’t expect validation to match the actual loss value of training, but the shape should look similar.
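To check the shape of the curves without eyeballing a plot, one option is to find where validation loss bottoms out and list the later epochs where training loss keeps falling anyway. This is a sketch with hypothetical helper names and made-up curves; with Keras the two lists would typically come from `history.history["loss"]` and `history.history["val_loss"]`.

```python
def best_validation_epoch(val_losses):
    """Index of the epoch with the lowest validation loss (first one on ties)."""
    return min(range(len(val_losses)), key=val_losses.__getitem__)

def epochs_overfitting(train_losses, val_losses):
    """Epochs after the validation minimum where training loss kept falling."""
    best = best_validation_epoch(val_losses)
    return [
        epoch
        for epoch in range(best + 1, len(val_losses))
        if train_losses[epoch] < train_losses[epoch - 1]
        and val_losses[epoch] > val_losses[best]
    ]

# Placeholder curves, made up for illustration (not taken from the plots in this thread).
train_losses = [0.60, 0.40, 0.25, 0.15, 0.08, 0.04]
val_losses = [0.58, 0.45, 0.41, 0.44, 0.50, 0.57]

print("best validation epoch:", best_validation_epoch(val_losses))
print("epochs that look like overfitting:", epochs_overfitting(train_losses, val_losses))
```

If nearly every epoch after the first or second lands in that list, the curves have diverged early, which matches the pattern described above.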

Some of this might be the y-scaling overemphasizing the noise. Since this is a binary problem (is it blurred or not), anything above 50% means it’s doing something, so a 90% success isn’t terrible. But I would expect validation to end up pretty close to training as it should be able to solve the problem in a general way. You might also benefit from looking at the class distribution of the bad classifications. If it’s all non-blurred images, maybe it’s because the images themselves are pretty monochrome or unfocused.
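Looking at the class distribution of the errors can be done with a simple tally of (actual, predicted) pairs. A minimal sketch, assuming labels are encoded as 0 for non-blurred and 1 for blurred (the gist may encode them differently) and using placeholder labels:

```python
from collections import Counter

def confusion_counts(y_true, y_pred):
    """Count (actual, predicted) pairs; assumed encoding: 0 = sharp, 1 = blurred."""
    return Counter(zip(y_true, y_pred))

def error_share_by_class(y_true, y_pred):
    """Fraction of each actual class that was misclassified."""
    counts = confusion_counts(y_true, y_pred)
    shares = {}
    for label in (0, 1):
        total = counts[(label, 0)] + counts[(label, 1)]
        wrong = counts[(label, 1 - label)]
        shares[label] = wrong / total if total else 0.0
    return shares

# Placeholder labels, made up for illustration.
y_true = [0, 0, 0, 0, 1, 1, 1, 1]
y_pred = [0, 0, 1, 1, 1, 1, 1, 0]

print(confusion_counts(y_true, y_pred))
print(error_share_by_class(y_true, y_pred))
```

If one class has a much larger error share than the other, the misclassified images from that class are the ones worth opening and inspecting by eye.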

OK, I changed the Conv2D layer to be 10x10. I also changed the dense units to 64. Here is just a single run of that with a confusion matrix.

I don’t really see a bias towards non-blurred images.